I write spells (programs) that direct mana (electricity) through runes (logic gates) to conjure illusions (display furries on my screen)

And all of it is done through magical focuses (hardware) created out of transmuted stone (silicon) and metals.

It’s a magic crystal ball.

I keep conjuring these boring niche illusions because these weird townsfolk give me money every couple weeks as long as I keep doing it.

Hey, if it pays for the orgies, all is well!

Source - The Dragon (Dragon magazine) #10, Page 5 - https://www.annarchive.com/files/Drmg010.pdf

are you actually working on a project that displays furries on your screen right now? cause if so I am too and I wanna compare notes

You might like Ra by Sam Hughes

EE major here. All the equations in the third panel are classical electrodynamics. To explain the semiconductors needed to make the switches to make the gates in the second picture, you really need quantum mechanics. You can get away with “fudged” classical mechanics for approximate calculations, but diodes and transistors are bona fide quantum mechanical devices.

But it’s also magic lol.

Quantum Physics Postdoc here. Although technically correct, this is also somewhat misleading. You need the band structure of solids, which is due to quantization and the Pauli exclusion principle. It's the same quantum mechanics that explains those strange electron energy levels for atoms we did in high school. The majority of quantum mechanics, however, is not required: coherence, spin, entanglement, superposition. In the field we describe semiconductors as quantum 1.0, and devices that use entanglement and superposition (i.e. a quantum computer) as quantum 2.0, and smear everything else in-between. This

It’s the 1000 Lv. boss magic counter attack against the 100 Lv. surprise attack; the complexity is just too much for our minds

Quantum Physics Postdoc here.

Can we trade?

Great write-up btw.

Can we trade?

Oh my sweet summer child, a 100x yes, if only it were possible.

But more seriously, if you’re doing EE, the world of quantum is your oyster. Specialize in RF/MW design and implementation, we use it for qubit control, and you’ll be highly valuable.

This what?

Oh no! The [clever quantum mechanics joke] got him!

Schrödinger opened the box 😱

[radioactive decay triggered the poison gas?]

[Quantum hype train?]

[Imposter syndrome?]

Interesting. Does tunneling fall under 1.0 or 2.0? Isn’t it considered a property of classical electrical engineering?

Good question. It would be application specific. I think evanescent wave coupling in EM radiation is considered "very classical" (whatever that actually means). But utilizing wave-particle duality for tunneling devices is past quantum 1.0 (1.5 maybe?). However, superconducting tunneling in Josephson junctions in a SQUID is closer to quantum 1.0, but 2.0 if used to generate entangled states for superconducting qubits for quantum computing.

Clear as mud right?

It is now that I’ve looked up the different types of tunneling you mentioned. I didn’t know there were multiple types of tunneling before now.

Thanks for the informative reply and prompting me to do some reading!

You’re talking about the old CPU designs, not the current ones fighting tunneling effects or the work-in-progress photonics & 2D hybrids.

No, I am not sure that I am.

Photonic processing, whilst very cool and super exciting, is not a quantum thing… Maxwell’s equations are exceedingly classical.

As for the rest, it’s transistor design optimisation, enabled predominantly by materials science and ASML’s EUV tech I guess :), but it still exploits the same underlying ‘quantum 1.0’ physics.

Spintronics (which could be what you mean by 2D) is for sure in-between (1.5?), leveraging spin for low energy compute.

Quantum 2.0 is systems exploiting entanglement and superposition - i.e. qubits in a QPU (and a few quantum sensing applications).

Yes. You need specifically the condensed matter models, that have the added bonus of the equations making little sense without some interesting lattice designs at their side.

Semiconductors specifically have the extra nice feature of depending on time-dependent values, which everybody just hand-waves away in favor of time-independent ones because it’s too much magic.

Hmmm, so all computers are technically quantum computers?

Not at all. In a classical computer, the memory is based on bits of information which can either[1] be in state 0 or state 1, and (assuming everything has been designed correctly) won’t exist in any other state than the allowed ones. Most classical computers work with binary.

In quantum computing, the memory is based on qubits, which are two-level quantum systems. A qubit can take any linear combination of quantum states |0⟩ and |1⟩. In quantum systems, linear combinations such as Ψ = α|0⟩ + β|1⟩ can use complex coefficients (α and β), normalized so that |α|² + |β|² = 1. Since α = |α|e^jθ is valid for any complex number, this indicates that quantum computing allows bits to have a phase with respect to each other. Geometrically, each bit of memory can “live” anywhere on the Bloch sphere, conventionally with |0⟩ at the “north pole” and |1⟩ at the “south pole”.
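To make the Bloch sphere picture a bit more concrete, here is a minimal Python sketch that turns a pair of amplitudes (α, β) into a point on the sphere. The function names are made up for illustration, and it assumes the common labeling where |0⟩ lands at z = +1 and |1⟩ at z = -1; which pole gets which label is only a convention.

```python
import math

def normalize(alpha, beta):
    """Scale (alpha, beta) so that |alpha|^2 + |beta|^2 = 1."""
    alpha, beta = complex(alpha), complex(beta)
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    if norm == 0:
        raise ValueError("the zero vector is not a valid qubit state")
    return alpha / norm, beta / norm

def bloch_vector(alpha, beta):
    """Return (x, y, z) on the Bloch sphere for alpha|0> + beta|1>.

    Assumed convention: |0> maps to z = +1 and |1> maps to z = -1.
    """
    alpha, beta = normalize(alpha, beta)
    overlap = alpha.conjugate() * beta
    x = 2 * overlap.real
    y = 2 * overlap.imag
    z = abs(alpha) ** 2 - abs(beta) ** 2
    return x, y, z

if __name__ == "__main__":
    print(bloch_vector(1, 0))    # |0>: sits on the z-axis
    print(bloch_vector(1, 1))    # equal mix, zero relative phase: on the x-axis
    print(bloch_vector(1, 1j))   # equal mix, 90 degree relative phase: on the y-axis
```

Note that multiplying both amplitudes by the same phase factor gives the same point, which is why only the relative phase between α and β shows up on the sphere.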

Quantum computing requires a whole new set of gates, and there’s issues with coherence that I frankly don’t 100% understand yet. And qubits are a whole lot harder to make than classical bits. But if we can find a way to make qubits available to everyone like classical bits are, then we’ll be able to get a lot more computing power.

The hardware works due to a quantum mechanical effect, but it is not “quantum” hardware because it doesn’t implement a two-level quantum system.

[1] Classical computers can be designed with N-ary digit memory (for example, trinary can take states 0, 1, and 2), but binary is easier to design for.

then we’ll be able to get a lot more computing power.

I think that’s not quite true; it depends on what you want to calculate. Some problems have more efficient algorithms for quantum computing (famously breaking RSA and other crypto algorithms). But something like a matrix multiplication probably won’t benefit.

It’s actually expected that matrix inversion will see a polynomial increase in speed, but with all the overhead of quantum computing, we only really get excited about exponential speedups such as in RSA decryption.

Classical computers compute using 0s and 1s, which refer to something physical like voltage levels of 0 V or 3.3 V respectively. Quantum computers also compute using 0s and 1s that refer to something physical, like the spin of an electron, which can only be up or down. The difference is in how many things there are to “look at” (called “observables”) if you want to know the value. With a classical bit there is just one single observable: if I want to know whether the voltage level is 0 or 1, I can just take out my multimeter and check.

With a qubit, there are actually three observables: σx, σy, and σz. You can think of a qubit like a sphere where you can measure it along its x, y, or z axis. These often correspond in real life to real rotations, for example, you can measure electron spin using something called Stern-Gerlach apparatus and you can measure a different axis by physically rotating the whole apparatus.

How can a single 0 or 1 be associated with three different observables? Well, the qubit can only have a single 0 or 1 at a time, so, let’s say, you measure its value on the z-axis, so you measure σz, and you get 0 or 1, then the qubit ceases to have values for σx or σy. They just don’t exist anymore. If you then go measure, let’s say, σx, then you will get something entirely random, and then the value for σz will cease to exist. So it can only hold one bit of information at a time, but measuring it on a different axis will “interfere” with that information.
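Here is a small Python sketch of that measurement behavior, for anyone who wants to poke at it. It is a toy model under stated assumptions: a qubit is a pair of complex amplitudes, each axis has two eigenstates labeled 0 and 1, and the names `BASIS`, `prob_zero` and `measure` are invented for this example.

```python
import math
import random

S = 1 / math.sqrt(2)

# Eigenstates for each axis: index 0 is the outcome labeled "0", index 1 is "1".
BASIS = {
    "z": ((1, 0), (0, 1)),
    "x": ((S, S), (S, -S)),
    "y": ((S, S * 1j), (S, -S * 1j)),
}

def prob_zero(state, axis):
    """Probability of reading 0 when measuring `state` along `axis`."""
    e0 = BASIS[axis][0]
    amplitude = (complex(e0[0]).conjugate() * state[0]
                 + complex(e0[1]).conjugate() * state[1])
    return abs(amplitude) ** 2

def measure(state, axis, rng=random):
    """Sample an outcome and return (outcome, collapsed_state)."""
    outcome = 0 if rng.random() < prob_zero(state, axis) else 1
    return outcome, BASIS[axis][outcome]

if __name__ == "__main__":
    state = (1, 0)                  # definitely 0 on the z-axis
    print(prob_zero(state, "z"))    # certain
    print(prob_zero(state, "x"))    # about one half: a coin flip
    _, state = measure(state, "x")  # collapse onto an x eigenstate
    print(prob_zero(state, "z"))    # about one half: the old z value is gone
```

Whichever x outcome you get, the state afterwards is an equal mix of the two z outcomes, which is the "interference" with the old information described above.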

It’s thus not possible to actually know the values for all the different observables because only one exists at a time, but you can also use them in logic gates where one depends on an axis with no value. For example, if you measure a qubit on the σz axis, you can then pass it through a logic gate where it will flip a second qubit or not flip it depending on whether σx is 0 or 1. Of course, if you measured σz, then σx has no value, so you can’t say whether or not it will flip the other qubit, but you can say that they would be correlated with one another (if σx is 0 then it will not flip it, if it is 1 then it will, and thus they are related to one another). This is basically what entanglement is.
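A rough sketch of that kind of correlation, using the textbook recipe (a Hadamard gate followed by a CNOT) rather than the exact gate described above. The two-qubit state is assumed to be a plain list of four amplitudes for |00⟩, |01⟩, |10⟩, |11⟩, and the function names are hypothetical.

```python
import math

def hadamard_on_first(state):
    """Apply a Hadamard gate to the first qubit of a two-qubit state.

    `state` lists the amplitudes for |00>, |01>, |10>, |11>.
    """
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]

def cnot(state):
    """Flip the second qubit when the first is 1 (swaps |10> and |11>)."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]

def probabilities(state):
    """Probability of each joint z-axis outcome: 00, 01, 10, 11."""
    return [abs(a) ** 2 for a in state]

# Start from |00>, give the first qubit no definite z value, then let it
# decide whether the second qubit gets flipped.
bell = cnot(hadamard_on_first([1, 0, 0, 0]))

if __name__ == "__main__":
    for label, p in zip(("00", "01", "10", "11"), probabilities(bell)):
        print(label, p)
```

Only the outcomes 00 and 11 have nonzero probability: neither qubit has a predictable value on its own, but the two always agree.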

Because you cannot know the outcome when you have certain interactions like this, you can only model the system probabilistically based on the information you do know, and because measuring qubits on one axis erases its value on all others, then some information you know about the system can interfere with (cancel out) other information you know about it. Waves also can interfere with each other, and so oddly enough, it turns out you can model how your predictions of the system evolve over the computation using a wave function which then can be used to derive a probability distribution of the results.

What is even more interesting is that if you have a system like this where you have to model it using a wave function, it turns out it can in principle execute certain algorithms exponentially faster than classical computers. So they are definitely nowhere near the same as classical computers. Their complexity scales up exponentially when trying to simulate quantum computers on a classical computer. Every additional qubit doubles the complexity, and thus it becomes really difficult to even simulate small numbers of qubits. I built my own simulator in C and it uses 45 gigabytes of RAM to simulate just 16. I think the world record is literally only like 56.
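For a back-of-the-envelope feel for the doubling, here is a short Python sketch. It assumes a dense representation with 16 bytes per complex amplitude (two 64-bit floats), which is an assumption for illustration only; real simulators differ in representation and bookkeeping, so their actual memory use will not match these numbers exactly.

```python
BYTES_PER_AMPLITUDE = 16  # assumption: one complex number as two 64-bit floats

def statevector_bytes(n_qubits):
    """Memory for a dense state vector: 2**n complex amplitudes."""
    return BYTES_PER_AMPLITUDE * 2 ** n_qubits

def density_matrix_bytes(n_qubits):
    """Memory for a dense density matrix: 2**n by 2**n complex entries."""
    return BYTES_PER_AMPLITUDE * 4 ** n_qubits

if __name__ == "__main__":
    gib = 2 ** 30
    for n in (16, 24, 32, 40):
        print(n, "qubits:",
              statevector_bytes(n) / gib, "GiB as a state vector,",
              density_matrix_bytes(n) / gib, "GiB as a density matrix")
```

Either way, adding one qubit multiplies the requirement by a fixed factor (2 for a state vector, 4 for a density matrix), so memory runs out long before the qubit count looks impressive.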

No. There are mechanical computers, based on mechanical moving parts, but they have been outdated since 1960.

If you don’t think “it’s magic” after emag theory… At a certain point the math does start to read like spell craft.

“Yes, yes, but what ratio of turns do we need in the transformer?”

Transistors are basically magic, when you get down to the chemistry of them. But when you get up to the “current here means current out there” part it becomes understandable again. Then you get to logic gates, and maybe up to basic addition and other operations. And all understanding disappears when you get to programmable circuits which run on pure magic once again.

And if you put the 200 layers of abstractions, indirections and quirks on top of the transistors, it’s magic again.
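The "logic gates up to basic addition" step is the one part that fits in a few lines, so here is a minimal Python sketch of it: everything is built from a single NAND function, then wired into a full adder and a ripple-carry adder. The function names and the default 8-bit width are choices made for this example, not anything from a real chip.

```python
def nand(a, b):
    """The only primitive gate: output is 0 only when both inputs are 1."""
    return 1 - (a & b)

def xor(a, b):
    """XOR built from four NAND gates."""
    t = nand(a, b)
    return nand(nand(a, t), nand(b, t))

def full_adder(a, b, carry_in):
    """Add three bits; return (sum_bit, carry_out)."""
    partial = xor(a, b)
    total = xor(partial, carry_in)
    carry_out = nand(nand(a, b), nand(partial, carry_in))
    return total, carry_out

def add(x, y, bits=8):
    """Ripple-carry addition of two unsigned integers, bit by bit.

    Returns (result, carry), where result is truncated to `bits` bits.
    """
    carry = 0
    result = 0
    for i in range(bits):
        s, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= s << i
    return result, carry

if __name__ == "__main__":
    print(add(5, 3))     # small numbers fit in 8 bits
    print(add(255, 1))   # overflows 8 bits, so the carry flag is set
```

Real hardware uses faster carry schemes, but the idea that addition is "just gates" is the same.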

I was a signal integrity engineer so emag theory was my job for a while.

Honestly, magic is quite right. At the base level, how these fields are created and how electrons moving around results in these rules is just the magic of how our universe works.

You can discover the rules to live by, but why it works that way gets smaller and smaller until it’s magic.

To a certain extent, it actually is magic. We still don’t fully understand the “why” of magnetism, just the “how”

Yeah sure but then if you dig down into the standard model of particle physics and quantum field theory to get the REAL answers, you learn that this phone I’m trying not to drop on my face is just a bunch of energy field waves kind of intersecting and coupling in just the right way and… shit nevermind. It’s magic all the way down.

It’s far more complicated than the last panel makes it out to be.

Ah if only it was a 2 instead of 3 in your name…

It’s magic, and we are enchanting engraved runes.

Photolithography machines are basically laser printers. So we’re printing these SoCs.

I feel like there is a pretty big gap between understanding how logic gates and truth tables work and understanding the underlying physics of how modern processors work.

So CrowdStrike was really the digital wizard equivalent of trying to cast “protectum de rectum” and accidentally casting “shid pants” combined with “butt crackus impenetrous”?

Now it all makes sense!

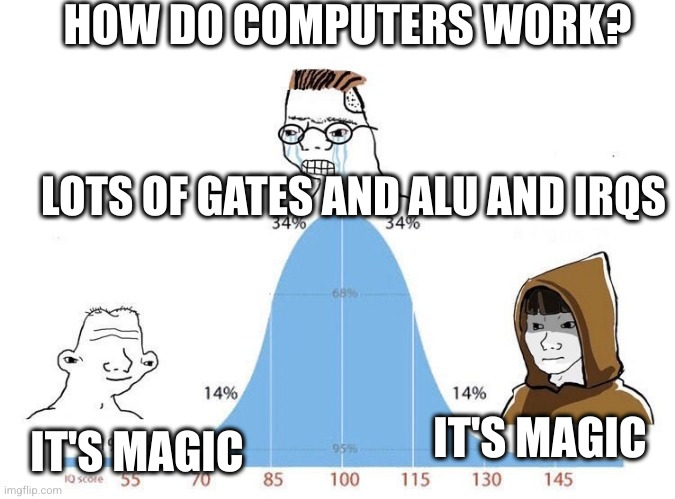

I think the difference between the first and second is whether you have a deep understanding of how high level languages translate into hardware operations. If you’re a novice, how that translation works might as well be magic.

The second panel understands exactly how that translation happens, and then it absolutely makes sense.

The third one is the next step, where you have a deep understanding of how the underlying physical phenomena make computers work, and again that might as well be magic because explaining it is like explaining magic.

Yeah. I’m currently at the beginning of the second stage, knowing boolean algebra, logic gates and dabbling in parsers and language design; however, most of that physics looks like magic to me.

One of the simplest known Turing-complete systems is the Rule 110 automaton.
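For anyone who hasn't seen it, the whole update rule fits in a few lines. A minimal Python sketch, assuming a finite row of cells with everything outside the row fixed at 0 (the real automaton lives on an infinite row, so this is only a toy window onto it):

```python
RULE = 110  # the rule number's binary digits are the lookup table

def step(cells):
    """Compute the next generation of a finite row of 0/1 cells.

    Cells beyond either end of the row are treated as 0.
    """
    padded = [0] + list(cells) + [0]
    return [
        (RULE >> (padded[i - 1] << 2 | padded[i] << 1 | padded[i + 1])) & 1
        for i in range(1, len(padded) - 1)
    ]

if __name__ == "__main__":
    row = [0] * 31 + [1]  # start with a single live cell on the right
    for _ in range(16):
        print("".join("#" if c else "." for c in row))
        row = step(row)
```

Each cell looks at itself and its two neighbors, reads them as a 3-bit number, and picks that bit out of 110.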

Well modern CPU design requires quite a lot of physics I think

A lot of physics and chemistry, I’d say at least as far back as the early 80s. Using lasers to cut silicon at the nanometer scale is real magic

Computer Engineer but ok

Computer Scientist but ok

Any study at a lower level than the mathematics of information processing falls under the realm of computer engineering. Computer science is more of a mathematical discipline than anything else; computer engineering is more of the intersection of electrical engineering and computer science than anything else.

Computer scientists wouldn’t do any research about the electromagnetics of how a computer works, they just trust that the electromagnetic design of the hardware creates a valid Turing machine for them to design programs for.

Computer Magician but ok

Hates computers but ok

Computer lover but ok

Each level looks like something I’d expect an undergrad to have at least seen before to be honest.

This is a great watch: https://youtube.com/playlist?list=PLowKtXNTBypGqImE405J2565dvjafglHU&si=f-jWdjiFCkVjmgDH

Thank you for my next week at work.

What’s the physics behind the giant perky fur tits and how is the collar staying closed.

It’s magic.

estrogen

I initially thought the first panel should be “switches!”

Switchcraft!